Automating Blob Cleanup with Azure Storage Lifecycle Management Policies

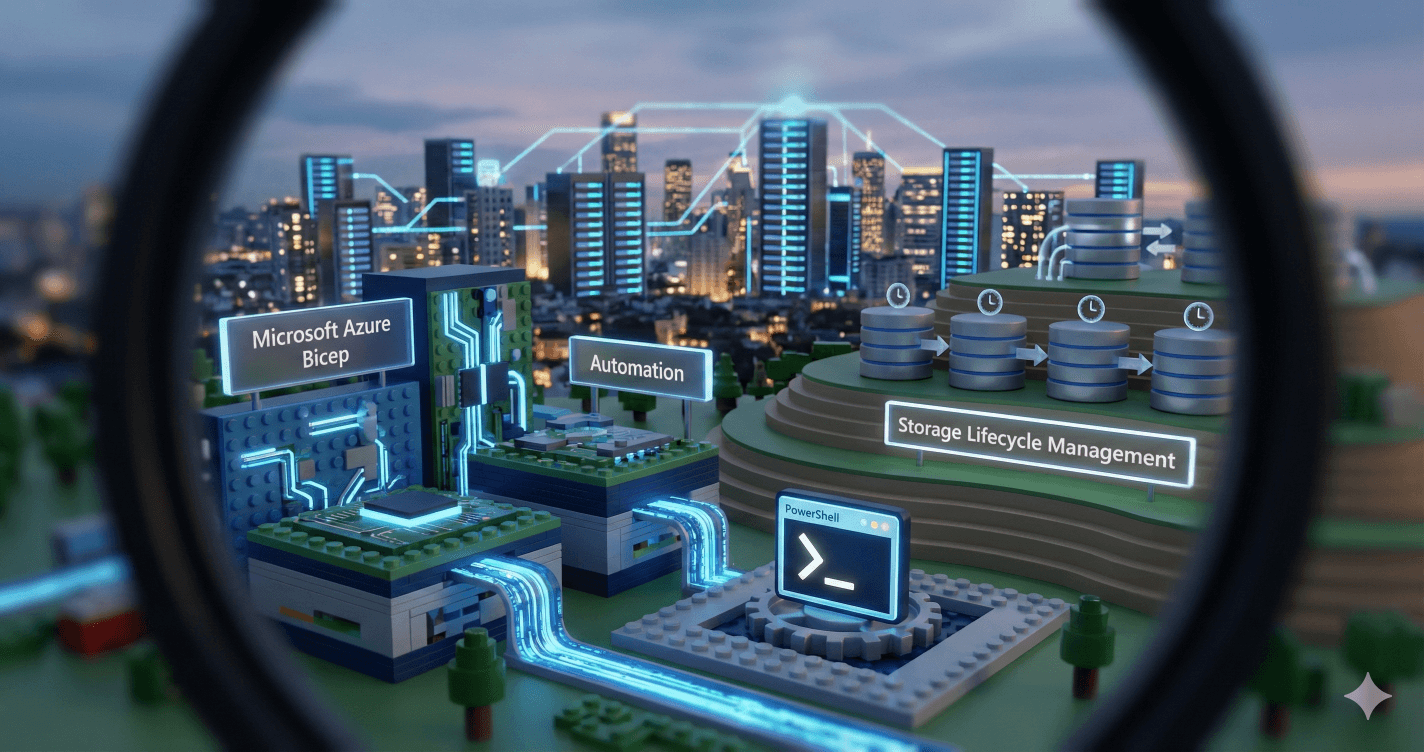

Sometimes the solution you're building doesn't need another Function App, another timer trigger, or another piece of custom code to maintain. Azure Storage has a built-in lifecycle management engine that can handle age-based cleanup policies entirely within the service—no compute, no secrets, no runtime to monitor. This post walks through how lifecycle policies work, what you can do with them, and how to deploy them cleanly using Bicep.

Reference: Azure Blob Storage Lifecycle Management

What Are Lifecycle Management Policies?

A lifecycle management policy is a JSON ruleset attached to a Storage Account that tells Azure, "Here's what I want you to do with blobs that match certain criteria." The Azure Storage service evaluates these rules periodically (timing is internal and service-managed) and applies actions like deleting old blobs, moving them to cooler storage tiers, or cleaning up versions and snapshots.

You define:

Filters: Which blobs to target (by type, container prefix, blob name prefix)

Actions: What to do with them (delete, tier to Cool/Archive, etc.)

Thresholds: Time-based conditions (days since creation, modification, or last access)

The policy runs automatically, transparently, and scales with your data—no servers, no invocation logs to parse, no scaling concerns.

Anatomy of a Lifecycle Rule

Here's the simplest possible rule: delete block blobs older than 7 days.

    {
      "rules": [
        {
          "name": "DeleteOldBlobs",
          "enabled": true,
          "type": "Lifecycle",
          "definition": {
            "filters": {
              "blobTypes": ["blockBlob"]
            },
            "actions": {
              "baseBlob": {
                "delete": {
                  "daysAfterModificationGreaterThan": 7
                }
              }
            }
          }
        }
      ]
    }

This rule:

Targets all block blobs (blobTypes)

Deletes base blobs (baseBlob.delete) if LastModified is more than 7 days ago

Runs periodically (service-managed schedule)

Filtering by Container

In production, you rarely want to apply a policy to every blob in the account. The prefixMatch filter lets you target specific containers or blob prefixes.

    "filters": {
      "blobTypes": ["blockBlob"],
      "prefixMatch": ["signin", "audit", "logs"]
    }

This matches:

signin/anything

audit/anything

logs/anything

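Blob paths are virtual (Azure Storage is flat), so signin/entra/20251123.json matches the signin prefix. You can be more granular: "prefixMatch": ["signin/entra"] would only target that subtree. The match is a plain, case-sensitive string prefix that starts at the container name, so signin would also match a container named signin-archive; include the trailing slash (signin/) if you need to pin a rule to exactly one container.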

Azure allows up to 100 rules in a Storage Account's lifecycle policy, and each rule accepts up to 10 prefixes. By consolidating multiple containers into a single rule with an array of prefixes, you stay well under quota and keep the policy maintainable.

Handling Snapshots

Blobs can have snapshots (point-in-time immutable copies). If you delete a base blob but leave snapshots orphaned, they continue consuming storage and cost. Lifecycle policies have a dedicated snapshot action:

    "actions": {
      "baseBlob": {
        "delete": {
          "daysAfterModificationGreaterThan": 7
        }
      },
      "snapshot": {
        "delete": {
          "daysAfterCreationGreaterThan": 7
        }
      }
    }

Note the difference:

Base blobs use daysAfterModificationGreaterThan (when the blob was last written)

Snapshots use daysAfterCreationGreaterThan (when the snapshot was created, which is immutable)

This ensures snapshots older than 7 days are purged alongside their parent, keeping storage tidy.

Tiering Before Deletion (Cost Optimization)

If your access patterns allow, you can tier blobs to cooler storage (Cool or Archive) before deleting them entirely. This reduces storage costs while retaining data for a grace period.

    "actions": {
      "baseBlob": {
        "tierToCool": {
          "daysAfterModificationGreaterThan": 7
        },
        "tierToArchive": {
          "daysAfterModificationGreaterThan": 30
        },
        "delete": {
          "daysAfterModificationGreaterThan": 90
        }
      }
    }

Lifecycle:

Day 7: Blob moves to Cool tier (lower storage cost, higher access cost)

Day 30: Blob moves to Archive tier (lowest storage cost, high rehydration cost)

Day 90: Blob deleted permanently

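This staged approach is common in compliance scenarios where you need to retain data for auditing but can tolerate slower access as it ages. When choosing thresholds, keep the tiers' minimum retention periods in mind: Cool (30 days) and Archive (180 days) carry early deletion charges, so a blob archived at day 30 and deleted at day 90 is billed for leaving Archive early.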

Reference: Access tiers for blob data
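The staged tiering rule above could be parameterized in Bicep roughly as follows. This is a minimal sketch rather than the module used in this post: the file name, the rule name TierThenDelete, and the parameters coolAfterDays, archiveAfterDays, and deleteAfterDays are illustrative assumptions.

```bicep
// tieringPolicy.bicep (hypothetical file name)
param storageAccountName string
param containerPrefixes array

// Hypothetical threshold parameters; the defaults mirror the JSON example above.
@minValue(1)
param coolAfterDays int = 7

@minValue(1)
param archiveAfterDays int = 30

@minValue(1)
param deleteAfterDays int = 90

resource storageAccount 'Microsoft.Storage/storageAccounts@2025-06-01' existing = {
  name: storageAccountName
}

resource managementPolicy 'Microsoft.Storage/storageAccounts/managementPolicies@2025-06-01' = {
  name: 'default'
  parent: storageAccount
  properties: {
    policy: {
      rules: [
        {
          name: 'TierThenDelete'
          enabled: true
          type: 'Lifecycle'
          definition: {
            filters: {
              blobTypes: ['blockBlob']
              prefixMatch: containerPrefixes
            }
            actions: {
              baseBlob: {
                tierToCool: {
                  daysAfterModificationGreaterThan: coolAfterDays
                }
                tierToArchive: {
                  daysAfterModificationGreaterThan: archiveAfterDays
                }
                delete: {
                  daysAfterModificationGreaterThan: deleteAfterDays
                }
              }
            }
          }
        }
      ]
    }
  }
}
```

A storage account holds a single management policy named default, so deploying this resource replaces whatever rules are already there. In practice the tiering rule would sit in the same rules array as your other rules rather than in a separate deployment.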

Blob Versioning and Lifecycle Policies

If versioning is enabled on your Storage Account, every overwrite creates a new version. Old versions can accumulate quickly. Lifecycle policies support version-specific actions:

    "actions": {
      "version": {
        "delete": {
          "daysAfterCreationGreaterThan": 30
        }
      }
    }

This deletes non-current versions older than 30 days, keeping only the latest version and recent history.

Reference: Blob versioning
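One way to make version cleanup opt-in from Bicep is to build the actions object conditionally. The sketch below assumes a hypothetical cleanUpVersions switch and versionRetentionDays parameter (neither is part of the module in this post) and merges the version action in with union() only when the switch is on.

```bicep
param storageAccountName string
param containerPrefixes array
param retentionDays int = 7

// Hypothetical switch: set to true only when blob versioning is enabled on the account.
param cleanUpVersions bool = false
param versionRetentionDays int = 30

// Always applied: delete base blobs after the retention window.
var baseActions = {
  baseBlob: {
    delete: {
      daysAfterModificationGreaterThan: retentionDays
    }
  }
}

// Applied only when cleanUpVersions is true: delete old non-current versions.
var versionActions = {
  version: {
    delete: {
      daysAfterCreationGreaterThan: versionRetentionDays
    }
  }
}

resource storageAccount 'Microsoft.Storage/storageAccounts@2025-06-01' existing = {
  name: storageAccountName
}

resource managementPolicy 'Microsoft.Storage/storageAccounts/managementPolicies@2025-06-01' = {
  name: 'default'
  parent: storageAccount
  properties: {
    policy: {
      rules: [
        {
          name: 'DeleteOldBlobs'
          enabled: true
          type: 'Lifecycle'
          definition: {
            filters: {
              blobTypes: ['blockBlob']
              prefixMatch: containerPrefixes
            }
            // Merge the version action into the rule only when requested.
            actions: union(baseActions, cleanUpVersions ? versionActions : {})
          }
        }
      ]
    }
  }
}
```

Keeping the two action sets in separate variables means the base rule reads the same whether or not versioning is in play.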

Last Access Time Tracking

Azure Storage can optionally track when each blob was last accessed (requires enabling access time tracking on the account). Policies can then tier or delete blobs based on how recently they were accessed rather than on when they were last modified:

    "actions": {
      "baseBlob": {
        "tierToCool": {
          "daysAfterLastAccessTimeGreaterThan": 30
        },
        "enableAutoTierToHotFromCool": true,
        "delete": {
          "daysAfterLastAccessTimeGreaterThan": 90
        }
      }
    }

This is powerful for log archives or cold data lakes where "staleness" means "nobody's reading this anymore" rather than "nobody's writing to this anymore."

Reference: Optimize costs by automatically managing the data lifecycle
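Access time tracking is a blob service setting, so it can be switched on in the same template that deploys the policy. The following is a sketch under assumptions: the rule name TierAndDeleteByLastAccess is made up for illustration, and the template only sets lastAccessTimeTrackingPolicy.

```bicep
param storageAccountName string
param containerPrefixes array

resource storageAccount 'Microsoft.Storage/storageAccounts@2025-06-01' existing = {
  name: storageAccountName
}

// Turns on last access time tracking for the account's blob service.
resource blobService 'Microsoft.Storage/storageAccounts/blobServices@2025-06-01' = {
  name: 'default'
  parent: storageAccount
  properties: {
    lastAccessTimeTrackingPolicy: {
      enable: true
    }
  }
}

resource managementPolicy 'Microsoft.Storage/storageAccounts/managementPolicies@2025-06-01' = {
  name: 'default'
  parent: storageAccount
  // Deploy the policy only after tracking has been enabled.
  dependsOn: [
    blobService
  ]
  properties: {
    policy: {
      rules: [
        {
          name: 'TierAndDeleteByLastAccess'
          enabled: true
          type: 'Lifecycle'
          definition: {
            filters: {
              blobTypes: ['blockBlob']
              prefixMatch: containerPrefixes
            }
            actions: {
              baseBlob: {
                tierToCool: {
                  daysAfterLastAccessTimeGreaterThan: 30
                }
                enableAutoTierToHotFromCool: true
                delete: {
                  daysAfterLastAccessTimeGreaterThan: 90
                }
              }
            }
          }
        }
      ]
    }
  }
}
```

If the blob service is already managed elsewhere in your templates (soft delete, versioning, CORS), fold the tracking setting into that definition instead, since redeploying blobServices with only this property may overwrite settings the template does not list.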

Deploying with Bicep

Bicep lets you version, test, and deploy lifecycle policies as infrastructure-as-code. Here's a minimal module:

    // lifecyclePolicy.bicep
    param storageAccountName string
    param containerPrefixes array
    param retentionDays int = 7

    // Reference the existing Storage Account; this module does not create it.
    resource storageAccount 'Microsoft.Storage/storageAccounts@2025-06-01' existing = {
      name: storageAccountName
    }

    // A Storage Account has a single management policy, always named 'default'.
    resource managementPolicy 'Microsoft.Storage/storageAccounts/managementPolicies@2025-06-01' = {
      name: 'default'
      parent: storageAccount
      properties: {
        policy: {
          rules: [
            {
              name: 'DeleteOldBlobs'
              enabled: true
              type: 'Lifecycle'
              definition: {
                filters: {
                  blobTypes: ['blockBlob']
                  prefixMatch: containerPrefixes
                }
                actions: {
                  baseBlob: {
                    delete: {
                      daysAfterModificationGreaterThan: retentionDays
                    }
                  }
                  snapshot: {
                    delete: {
                      daysAfterCreationGreaterThan: retentionDays
                    }
                  }
                }
              }
            }
          ]
        }
      }
    }

Reference: Microsoft.Storage/storageAccounts/managementPolicies

Deploy with environment-specific parameters:

    New-AzResourceGroupDeployment `
      -ResourceGroupName "rg-prod" `
      -TemplateFile ./infra/main.bicep `
      -TemplateParameterFile ./infra/parameters.prod.json

Parameter files let you vary container lists and retention periods across dev/test/prod without duplicating Bicep code.

Reference: New-AzResourceGroupDeployment
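The deployment command targets main.bicep, while the policy itself lives in lifecyclePolicy.bicep. The post does not show main.bicep, so the following is only a guess at its shape: a thin entry point that validates the parameters and passes them to the module. The relative module path and the deployment name are assumptions.

```bicep
// main.bicep (sketch; assumes lifecyclePolicy.bicep sits in the same folder)
param storageAccountName string

// Refuse an empty prefix list so the rule cannot silently become account-wide.
@minLength(1)
param containerPrefixes array

@minValue(1)
param retentionDays int = 7

module lifecyclePolicy './lifecyclePolicy.bicep' = {
  name: 'lifecyclePolicy'
  params: {
    storageAccountName: storageAccountName
    containerPrefixes: containerPrefixes
    retentionDays: retentionDays
  }
}
```

The decorators are optional, but they turn an accidental empty prefix list or a zero-day retention into a deployment-time error instead of an unexpected round of deletions.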

Multi-Environment Strategy

Structure parameter files like this:

parameters.dev.json (aggressive cleanup for fast iteration):

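    {
      "$schema": "https://schema.management.azure.com/schemas/2019-04-01/deploymentParameters.json#",
      "contentVersion": "1.0.0.0",
      "parameters": {
        "storageAccountName": { "value": "stdevblobcleanup001" },
        "containerPrefixes": { "value": ["testa", "testb"] },
        "retentionDays": { "value": 2 }
      }
    }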

parameters.prod.json (conservative retention):

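    {
      "$schema": "https://schema.management.azure.com/schemas/2019-04-01/deploymentParameters.json#",
      "contentVersion": "1.0.0.0",
      "parameters": {
        "storageAccountName": { "value": "stprodblobcleanup001" },
        "containerPrefixes": { "value": ["signin", "audit", "logs"] },
        "retentionDays": { "value": 90 }
      }
    }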

Same Bicep template, different behavior per environment. Version control tracks changes; CI/CD pipelines enforce review gates before production deployments.
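If you would rather keep parameters in Bicep syntax than JSON, a .bicepparam file can carry the same values. This is an alternative sketch, not what the post's repository uses; the file name is hypothetical, and it assumes Bicep and Az PowerShell versions recent enough to support .bicepparam files.

```bicep
// parameters.prod.bicepparam (hypothetical file name)
using './main.bicep'

param storageAccountName = 'stprodblobcleanup001'
param containerPrefixes = [
  'signin'
  'audit'
  'logs'
]
param retentionDays = 90
```

The using statement ties the file to its template, so a misspelled or mistyped parameter is flagged by the Bicep tooling before deployment. Under the same assumptions, the file is passed to -TemplateParameterFile in place of the JSON file.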

When to Use Lifecycle Policies vs Custom Code

Use lifecycle policies when:

Retention logic is purely time-based (days since modification/creation/access)

You want zero operational overhead (no Functions, no logs to monitor)

Filters are simple (blob type, container prefix, blob name prefix)

Tiering and deletion are sufficient actions

Use custom code (e.g., Azure Functions) when:

You need filename parsing or complex business logic (e.g., "delete if filename matches pattern X")

Per-run reporting is required (deletion counts per container logged to App Insights)

You need conditional behavior (demo mode vs real mode, dry-run logic)

Integration with external systems (send notifications, update databases, emit custom metrics)

Lifecycle policies are elegant for straightforward retention; custom code is the escape hatch for everything else.

Testing Your Policy

Before enabling a policy in production, seed test data with the provided Seed-StorageContainers.ps1 script:

    ./scripts/Seed-StorageContainers.ps1 `
      -StorageAccountName "stdevblobcleanup001" `
      -ResourceGroup "rg-dev" `
      -PastDays 10 `
      -FutureDays 2

This creates blobs with filenames encoding timestamps (e.g., signin/entra/20251113000000.json). Deploy your policy with a short retention window (retentionDays: 2) and check after the next daily evaluation cycle (typically within 24 hours) whether old blobs were deleted.

Important caveat: Lifecycle policies evaluate LastModified or creation time, not filename content. The seeding script creates blobs with timestamps in their filenames, but all blobs will have LastModified set to the upload time. To test retention policies effectively, you need to wait for blobs to age naturally—there is no supported way to backdate blob timestamps. For rapid testing, use very short retention periods (1-2 days) and verify policy execution after 24-48 hours.

Validating the Policy in the Azure Portal

After deployment (Bicep or ARM), you can confirm the lifecycle policy configuration and observe its effects directly in the Azure Portal.

Verify Rule Definition

Navigate to the Storage Account.

In the left menu, under Data management, select Lifecycle management.

Use the List view tab to confirm your rule appears (e.g., DeleteOldBlobs) and its status is Enabled.

Switch to Code view to inspect the JSON that the portal stored. It should reflect the Bicep deployment (prefixes, daysAfterModificationGreaterThan, snapshot settings).

If the rule was just deployed or modified, allow up to 24 hours for the first evaluation cycle (per Microsoft guidance). The presence of the rule in the portal does not mean deletions have already occurred.

Reference: Configure a lifecycle management policy (Azure Portal)

Monitoring and Observability

Lifecycle policy executions don't emit logs to Application Insights or Azure Functions invocation history. To track deletions:

Storage Analytics Logs: Enable logging on the Storage Account; deletion operations appear in the $logs container

Azure Monitor Metrics: Track capacity metrics such as BlobCount and BlobCapacity (reported for the account as a whole, not per container)

Log Analytics Integration: Route diagnostic logs to a Log Analytics workspace and query StorageBlobLogs

Reference: Monitor Azure Blob Storage
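The Log Analytics option can be wired up from Bicep with a diagnostic setting scoped to the blob service. This is a sketch under assumptions: the workspaceId parameter expects the resource ID of a workspace that already exists, and the setting name is arbitrary. Only the StorageDelete category is enabled, since deletions are what the policy produces.

```bicep
param storageAccountName string

// Resource ID of an existing Log Analytics workspace (assumed to be created elsewhere).
param workspaceId string

resource storageAccount 'Microsoft.Storage/storageAccounts@2025-06-01' existing = {
  name: storageAccountName
}

resource blobService 'Microsoft.Storage/storageAccounts/blobServices@2025-06-01' existing = {
  name: 'default'
  parent: storageAccount
}

// Sends blob delete operations to the workspace, where they land in StorageBlobLogs.
resource blobDiagnostics 'Microsoft.Insights/diagnosticSettings@2021-05-01-preview' = {
  name: 'blob-deletes-to-log-analytics'
  scope: blobService
  properties: {
    workspaceId: workspaceId
    logs: [
      {
        category: 'StorageDelete'
        enabled: true
      }
    ]
  }
}
```

Add the StorageRead and StorageWrite categories in the same logs array if you also want to correlate deletions with access patterns, at the cost of higher ingestion volume.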

Example Kusto query for deletion tracking:

    StorageBlobLogs
    | where OperationName == "DeleteBlob"
    | where TimeGenerated > ago(7d)
    // split() returns a dynamic value; cast it to string so it can serve as a group key
    | summarize DeletionCount = count() by ContainerName = tostring(split(Uri, "/")[3])
    | order by DeletionCount desc

Extending the Bicep Module

The lifecycle policy module can grow with your needs. Add parameters for:

Tiering actions: Expose tierToCool, tierToArchive with separate thresholds

Version cleanup: Add a version.delete action if versioning is enabled

Multiple rules: Loop over an array of rule definitions for complex scenarios

Conditional deployment: Use Bicep conditionals to deploy policies only if certain features are enabled (e.g., versioning, soft delete)

Bicep's modular design keeps the core template simple while allowing opt-in complexity.

Reference: Bicep Best Practices
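As an illustration of the "multiple rules" and "conditional deployment" ideas, the sketch below generates one rule per entry in an array and skips the policy entirely when the array is empty. The cleanupRules parameter and its name, prefixes, and retentionDays fields are assumptions made for this example, not part of the module shown earlier.

```bicep
param storageAccountName string

// Hypothetical shape for each entry:
// { name: 'DeleteSigninLogs', prefixes: ['signin'], retentionDays: 30 }
param cleanupRules array

resource storageAccount 'Microsoft.Storage/storageAccounts@2025-06-01' existing = {
  name: storageAccountName
}

// Deploy the policy only when at least one rule is supplied.
resource managementPolicy 'Microsoft.Storage/storageAccounts/managementPolicies@2025-06-01' = if (!empty(cleanupRules)) {
  name: 'default'
  parent: storageAccount
  properties: {
    policy: {
      // One lifecycle rule is generated per array entry.
      rules: [for rule in cleanupRules: {
        name: rule.name
        enabled: true
        type: 'Lifecycle'
        definition: {
          filters: {
            blobTypes: ['blockBlob']
            prefixMatch: rule.prefixes
          }
          actions: {
            baseBlob: {
              delete: {
                daysAfterModificationGreaterThan: rule.retentionDays
              }
            }
          }
        }
      }]
    }
  }
}
```

Per-container retention then becomes a matter of editing the parameter file: each environment supplies its own cleanupRules array and the template stays unchanged.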

Wrapping Up

Azure Storage Lifecycle Management Policies are the right tool when retention is time-based and you value operational simplicity over granular control. Deploy them with Bicep, test with realistic data, and let the service handle the rest. Your infrastructure stays declarative, your storage costs stay predictable, and you avoid the operational overhead of maintaining yet another background job.

For scenarios demanding filename parsing, per-run reporting, or conditional logic, custom code remains the escape hatch—but for most cleanup workloads, the built-in lifecycle engine is enough. 🚀